Using ETL

The Deep Learning module depends on ETL. Ensure the ETL module is available and enabled in Ambience. An ETL chainset can then be created to use the uploaded models.

Below are some examples of how ETL is used to forecast and predict with the different types of models.

- DL4J Multi Layer Network

- DL4J Computation Graph

- Keras Functional

- Keras Sequential

- Sentiment

- Object Detection YOLO V3

DL4J Multi Layer Network / Keras Sequential Model

The DL4J Multi Layer Network model (for Java) is equivalent to the Keras Sequential model (for Python).

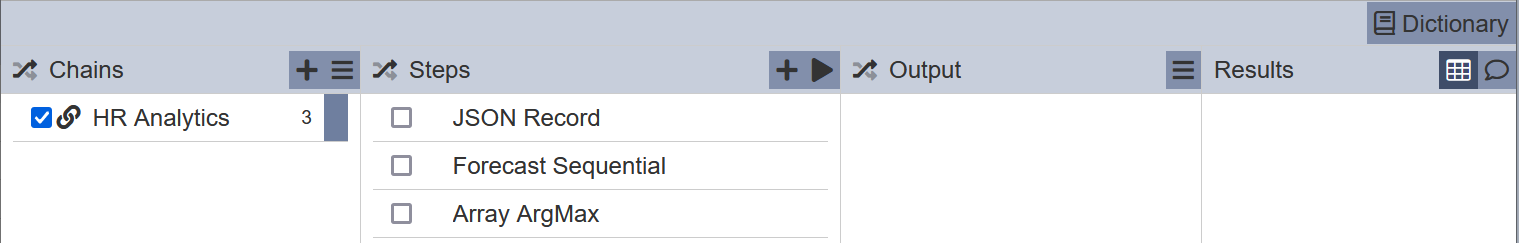

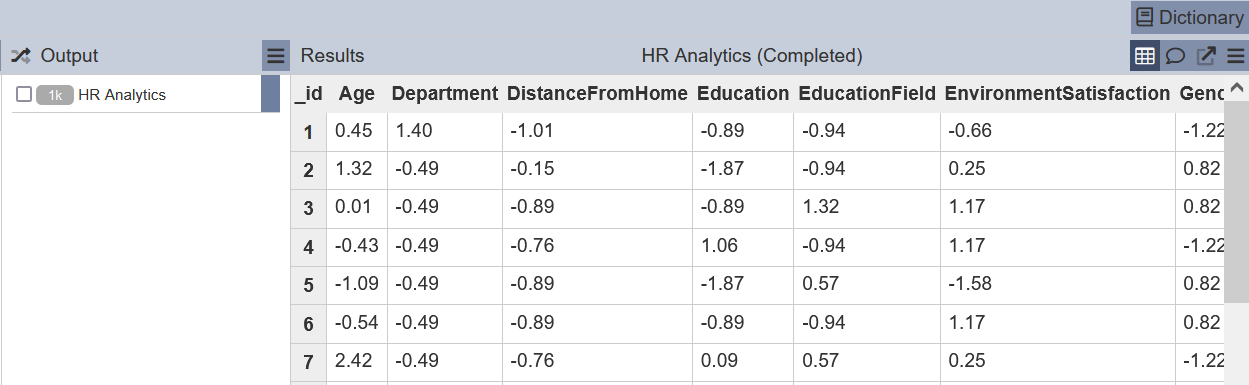

In this example, the “HR Analytics” ETL chainset performs prediction using the input data.

This ETL chainset performs the following:

- First step reads in JSON records

- Second step forecasts using the input data (in array form)

- Third step flattens the array and displays the forecast result

Run the steps and the ETL chainset performs the prediction.
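The three steps above can be pictured outside Ambience with a minimal Python sketch. This is not the Ambience ETL API: the field names (`age`, `tenure`, `forecast_0`), the sample records, and the `predict` stand-in are all hypothetical, and a real chainset would call the uploaded model instead of the dummy rule shown here.

```python
import json

# Hypothetical input records; the field names are assumptions for illustration.
RAW = '[{"id": 1, "age": 34, "tenure": 5}, {"id": 2, "age": 51, "tenure": 20}]'


def predict(features):
    """Stand-in for the uploaded model; returns its result in array form."""
    age, tenure = features
    # Dummy rule only; a real model would return its output layer here.
    return [1.0 if tenure >= 10 else 0.0]


def run_chain(raw_json):
    # Step 1: read the JSON records.
    records = json.loads(raw_json)

    # Step 2: forecast from the input data; the result is an array.
    for rec in records:
        rec["forecast"] = predict([rec["age"], rec["tenure"]])

    # Step 3: flatten the array into one plain field per element.
    flat = []
    for rec in records:
        out = {k: v for k, v in rec.items() if k != "forecast"}
        for i, value in enumerate(rec["forecast"]):
            out[f"forecast_{i}"] = value
        flat.append(out)
    return flat


if __name__ == "__main__":
    for row in run_chain(RAW):
        print(row)
```

Keeping the forecast as an array until the last step mirrors the chainset, where flattening is a separate step from prediction.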

DL4J Computation Graph / Keras Functional Model

The DL4J Computation Graph model (for Java) is equivalent to the Keras Functional model (for Python).

TODO

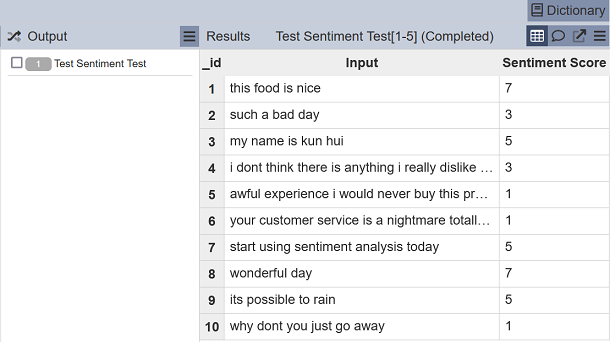

Sentiment Model

Sentiment forecasting, or sentiment analysis, lets you determine whether an input sentence (a string) is positive or negative. The result ranges from 1 (very negative) to 10 (very positive).

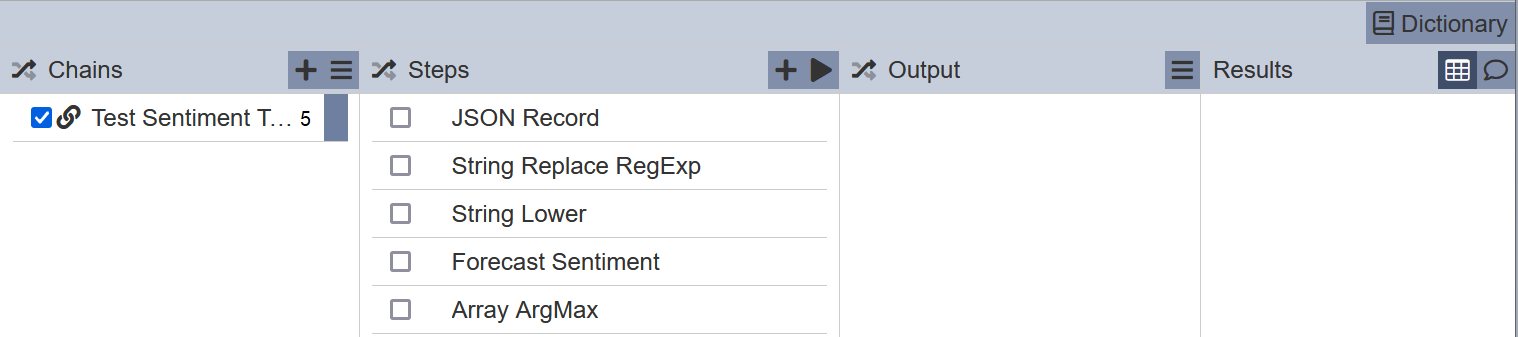

In this example, the “Sentiment Test” ETL chainset forecasts the sentiment score of the input data, in this case JSON records.

This ETL chainset performs the following:

- First step reads in JSON records

- Second step removes all punctuation and retains alphanumeric characters

- Third step converts all characters to lower case

- Fourth step forecasts the sentiment (in array form)

- Fifth step flattens the array and displays the sentiment score

Run the steps and the ETL chainset forecasts the sentiment score.
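The two text-cleaning steps (second and third) can be sketched in plain Python as follows. This is an illustration only, not the Ambience step configuration: the function name `clean_text` is hypothetical, and keeping whitespace so that words stay separated is an assumption.

```python
import re


def clean_text(text):
    """Remove punctuation, keep alphanumeric characters, then lower-case."""
    # Keep letters, digits and whitespace; drop every other character.
    no_punct = re.sub(r"[^A-Za-z0-9\s]", "", text)
    return no_punct.lower()


if __name__ == "__main__":
    print(clean_text("Great product, would BUY again!!"))
```

Normalising the text this way means the model sees the same tokens regardless of capitalisation or trailing punctuation in the input records.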

Object Detection YOLO V3 Model

This model detects objects using “YOLO V3” models that cover 80 classes. It takes the bytes of an image as input, performs object detection, and outputs the result as an array of objects.

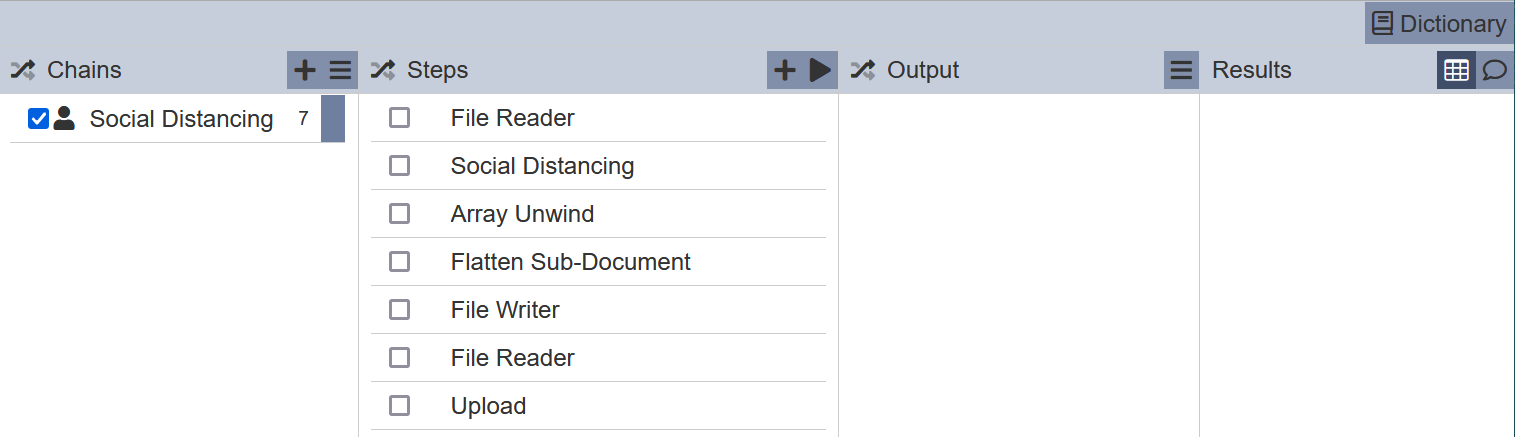

In the example below, a “Social Distancing” ETL chainset is created to detect the distance between objects using the ValidYOLO V3 model uploaded in the Deep Learning module.

This ETL chainset performs the following:

- First step reads in an input picture file placed in the Ambience “data/in” folder

- Second step defines the model to be used and the necessary parameters

- Third step unwinds the result array

- Fourth step flattens the document

- Fifth step writes the output file

- Sixth step reads in the output file

- Seventh step uploads the output file to Uploads module

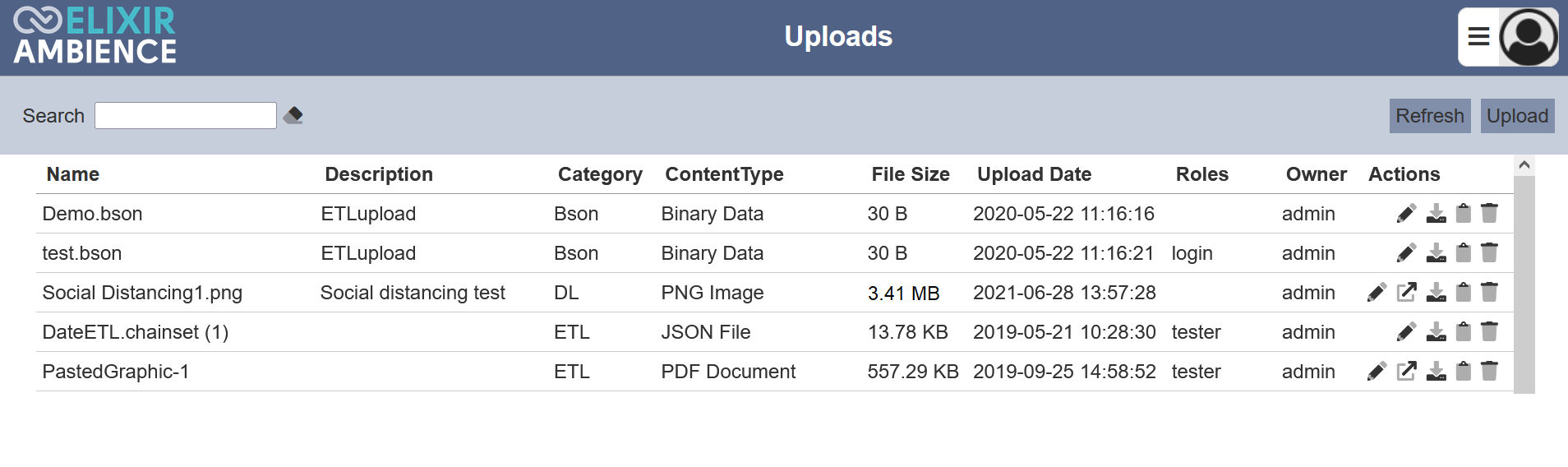

Run the steps and view the output file in the Uploads page.
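To illustrate what the unwind and flatten steps (third and fourth) do to a detection result, here is a minimal Python sketch. The document layout and the field names (`file`, `result`, `label`, `box`) are assumptions made for this example; the actual output structure of the model is not shown in this guide.

```python
def unwind(doc, field):
    """Yield one copy of doc per element of the array stored in doc[field]."""
    for item in doc.get(field, []):
        out = dict(doc)
        out[field] = item
        yield out


def flatten(doc, prefix=""):
    """Collapse nested dicts into a single level using dotted keys."""
    flat = {}
    for key, value in doc.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=f"{name}."))
        else:
            flat[name] = value
    return flat


if __name__ == "__main__":
    # Hypothetical detection result: one document holding an array of objects.
    doc = {
        "file": "street.jpg",
        "result": [
            {"label": "person", "box": {"x": 10, "y": 20}},
            {"label": "person", "box": {"x": 60, "y": 25}},
        ],
    }
    for row in (flatten(d) for d in unwind(doc, "result")):
        print(row)
```

After both steps, each detected object becomes its own single-level record, which is easier to write out as a file.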

In the Upload Management page, click on the “Copy Link” icon under the “Actions” column to copy the URL of the output file. Open a browser tab and paste the link into the address bar. Press the “Enter” key and the output file will be displayed. You can also download the output file by clicking on the “Download” icon under the “Actions” column.

The model detects objects and the distance between them, highlighting in red those who violate the safe distance.
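This guide does not describe how the distance is calculated. As a rough sketch of the idea only, the example below assumes each detection is a bounding box `(x, y, width, height)` in pixels and measures the straight-line distance between box centres; the function names and the threshold value are hypothetical.

```python
from itertools import combinations
from math import hypot


def centre(box):
    """Return the centre point of an (x, y, width, height) box."""
    x, y, w, h = box
    return (x + w / 2, y + h / 2)


def violations(boxes, min_distance):
    """Return index pairs whose box centres are closer than min_distance."""
    centres = [centre(b) for b in boxes]
    close = []
    for (i, a), (j, b) in combinations(enumerate(centres), 2):
        if hypot(a[0] - b[0], a[1] - b[1]) < min_distance:
            close.append((i, j))
    return close


if __name__ == "__main__":
    # Three hypothetical detections; the first two stand close together.
    boxes = [(0, 0, 10, 20), (30, 0, 10, 20), (200, 0, 10, 20)]
    print(violations(boxes, min_distance=50))
```

The pairs returned are the detections that would be drawn in red. A pixel distance ignores camera perspective, so a production model may well use a different measure.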